Unlock Your Data's Value With Address Normalization

What is Normalization?

Data normalization is a term you might not be familiar with, but it is key to the success of virtually every matching exercise, and is in fact fundamental to database theory in general. In essence its the science of "you say pot-tay-to and I say po-tah-to". We want different expressions of the same thing to match.

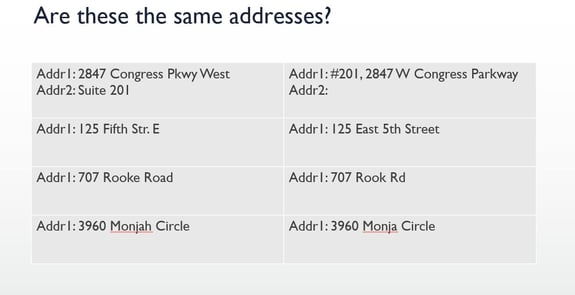

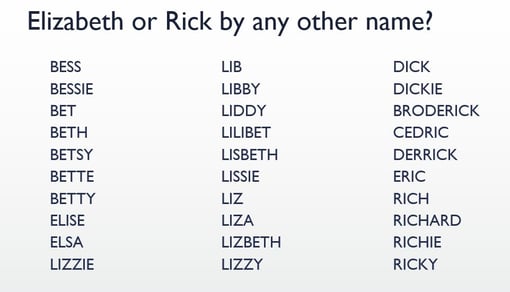

There are countless data normalization opportunities in your data. Phone numbers, like “(604) 437-7873” vs. "+1 604 4377873" or "604-437-7873"; names, like "Richard" vs. "Dick"; or addresses, like "210-123 W Ridge Rd." and "123 Ridge Road West, Appt. 210". Even "Richard" vs. "RICHARD" can be a problem. These examples all obviously represent the same things, but still can sometimes be very hard to match.

The biggest single failure point in most scripts is the Esc key inadvertently getting pressed. This is why Arbutus allows you to turn off the Esc key, with SET ESC OFF. When your script is running, pressing Esc will be ignored. Just as importantly, if you have a dialog command for user input, then the 'Cancel' button is also disabled. There is no way for a user to interrupt the script through either of these methods.

Example:

The Value of Normalizing

It's hard to quantify the value of data normalization, except that it makes finding duplicates and matches easier. It's only when you look at specific examples that the value appears. Finding duplicate customer or vendor records might simply reduce the size of your files (which is good), but by combining the information, you may gain better insights into your data relationships, save on mailing costs, or even detect fraud (which may be more valuable).

Matching external databases to your own files can be even more valuable. You might be comparing your customer data with your web site applications, to sync the information. Even more compelling, you might be comparing customers, vendors, or employees to various watch-lists, to assess the risks. You just don't know the value until you look, but it's almost certain to have more value than the near-zero cost of looking. Even the confidence of knowing there are no matches can have value.

An Approach to Normalizing: Remove Insignificant Detail

Data normalization is key whenever you are blending data (comparing, joining, …), particularly data from different sources. One useful approach with addresses is to eliminate as many insignificant differences as possible. This lets you (and the processing) focus instead on what's significant.

For example, you might want to match your customer file with addresses that you purchased, or from a watch list, or perhaps from your own web site. It's quite likely that the different data sources may not have recorded the addresses exactly the same.

You might also be looking for duplicates in a single database. In this case, there may have been accidental duplications, or intentional duplications, perhaps to commit a fraud. In the latter case, they may have intentionally made the addresses different, to better hide the activity.

Address Normalization

Address normalization is particularly important when we are addressing the risks that arise when vendors, employees, and watch list entities may share the same addresses.

What's Significant?

OK, what exactly do we mean by insignificant differences? Capitalization, punctuation and the like are obvious (and important), but they are pretty easy to deal with. More challenging are abbreviations and “noise” words. The address "123 Main Street" and "123 Main St." clearly refer to the same address, but matching them automatically can be challenging. Similarly, just imagine trying to match the identical addresses "210-123 W 3rd Ave." and "123 Third Avenue West, Appt. 210". Normalizing is a way of handling this complexity.

Implementation Issues

Matching addresses is hard and addresses present unique issues. First, there are literally hundreds of abbreviations in addresses (street/st, road/rd, etc.) that should be standardized. The list we use from the US Postal Service (USPS) includes about 500 variations. There are also many 'noise' words (appt, unit, floor, Suite …) that may not be significant to the interpretation of the address.

Second (and very importantly), you need to apply this analysis on a “whole word” basis. If you simply replace, “ROAD” with “RD” then “BROADWAY” becomes “BRDWAY”, and if just you omit noise words like “FLOOR” then “JONES FLOORING” becomes “JONES ING”. Third, it is important to note that the components of each address might be in a different order, as in the example in the previous paragraph.

How Arbutus Helps

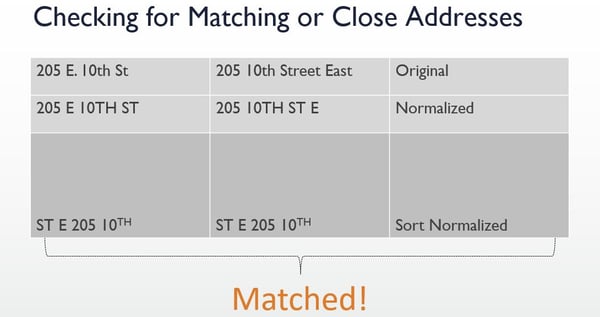

Without the right tools, creating rules that correctly handle the issues noted above can be more challenging than you might think. The goal, as with most tasks, should be 'simpler is better', and this is where Arbutus comes in. Arbutus can automatically perform all of the normalizations noted above, and more. Two addresses as different as "#200 - 1234 W Main Street" and "1234 MAIN ST. WEST, Suite 200" match perfectly, every time, and with just one command!

Example:

The Normalize and SortNormalize functions within Arbutus Analyzer automatically apply a wide variety of techniques, including those mentioned here, ensuring maximum performance for your data blending analytics. A computed field as simple as: SortNormalize(address, “USPS”) will accomplish this powerful normalization, which can then be used to dramatically improve your results when matching addresses.

Analyzer’s proprietary normalization technology is fully user configurable, and automatically whole-word based. It allows either the replacement or elimination of any words or abbreviations. It is also designed for industrial use, so you can easily handle hundreds (or even thousands) of distinct terms, in datasets with tens or even hundreds of millions of records.

You can update your lists at any time, to reflect experience or new ideas. You can also create separate lists for each distinct type of normalization, whether by area, language or whatever the need. You can even reference other important external lists, such as watch lists or other relevant content, to leverage the knowledge of others.

Finally, and very importantly, the lists you use to normalize addresses are totally separate from the scripts that use them. This means you can update, refine or add to your criteria at any time, without changing any scripts.

Analytics By Business Process

Learn more about various analytics tests to meet business needs.

Analytics By Business Process

.webp?width=296&height=131&name=arbutus_AI-Powered-Advanced-Analytics%20(1).webp)